A world model is like an internal map of how the world works. An AI builds it by watching what happens after each action, so it can imagine outcomes and plan ahead. No human labeling is required; raw video does the trick.

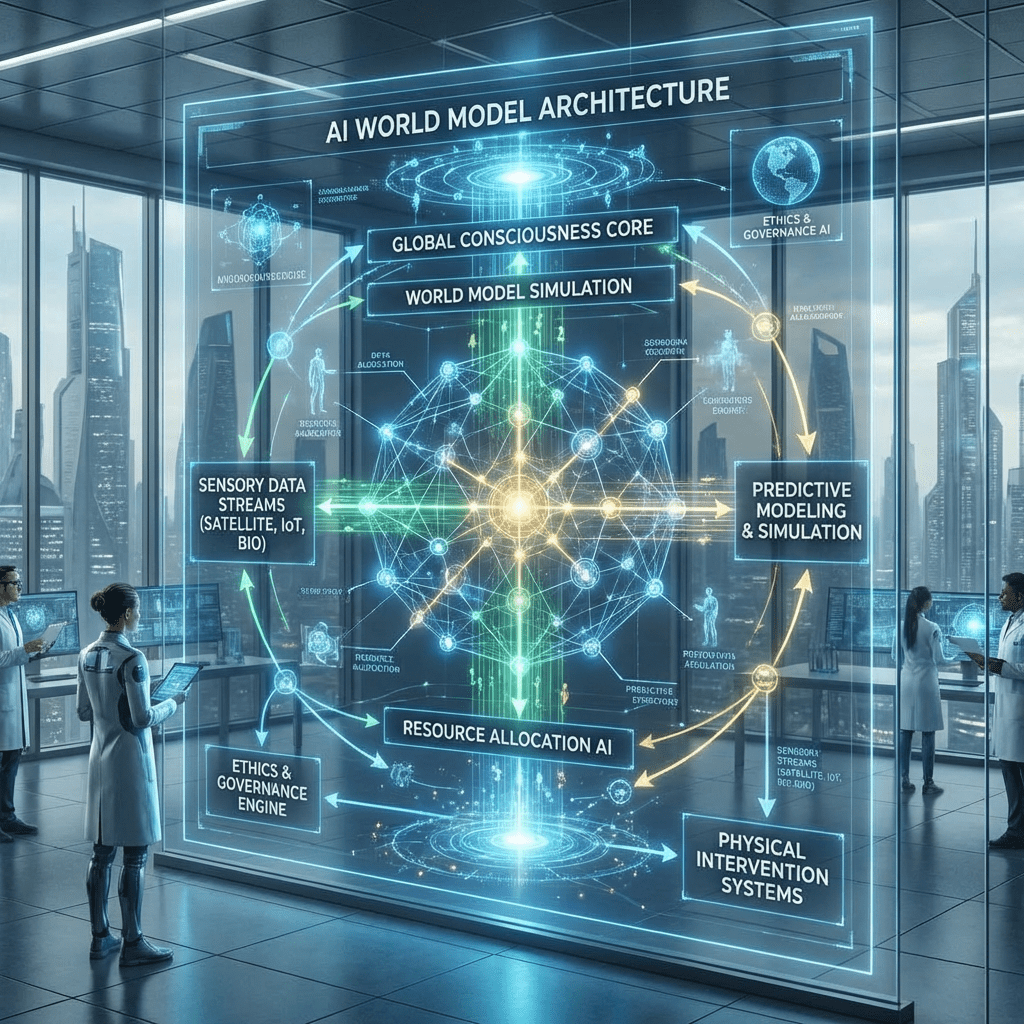

Main architecture

Think of it as a smart game simulator inside the AI:

- You see something (like a rolling ball): The AI watches and summarizes it simply, like “ball moving right.”

- You remember the past (where the ball was before): It recalls its last “map update.”

- You imagine what happens next (if I kick it?): It guesses the future picture, like “ball flies left,” trying different “what if” endings to handle surprises.

- You learn from real life: By comparing its guesses to what actually happened, it gets better—like practicing free throws by checking if the ball went in.

No magic math; just “watch, guess, check, improve” on videos or robot senses instead of words. No labeling needed—the real video frame becomes the automatic “correct answer.”
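To make the "watch, guess, check, improve" loop concrete, here is a minimal sketch in plain Python. Everything in it is an illustrative assumption: the toy world is a ball on a line, the hidden rule "one unit of push moves the ball two units" is made up, and the model is a single number (`weight`) rather than a neural network.

```python
import random

def real_world(position, push):
    # Hidden rule the model is never told: one unit of push moves the ball 2 units.
    return position + 2.0 * push

def train(steps=1000, learning_rate=0.05, seed=0):
    rng = random.Random(seed)
    weight = 0.0  # the model's current belief about how far a push moves the ball
    for _ in range(steps):
        position = rng.uniform(-5, 5)         # watch: where is the ball now?
        push = rng.uniform(-1, 1)             # an action happens
        guess = position + weight * push      # guess: where will the ball be next?
        actual = real_world(position, push)   # check: what really happened
        error = guess - actual
        weight -= learning_rate * 2 * error * push  # improve: gradient step on squared error
    return weight

learned = train()
print(f"learned push effect: {learned:.2f}")
```

Nobody labels anything here: the "correct answer" at each step is just the ball's real next position, and the learned value should settle close to the hidden 2.0.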

Key Components

- The watcher (Encoder): Like eyes and brain turning messy video into simple notes: “car ahead, road curves left.” Ignores useless details like every leaf.

- The guesser (Predictor): Your “what if” daydreamer. Asks: “If I turn the wheel, does the car swerve safely?” Practices many futures in its head.

- Surprise handler (Latent variables): Life isn’t predictable, so it tries a few endings like “maybe the car stops, maybe it speeds up,” picking the smartest path.

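- Learning engine (Energy-based models): A coach that scores how well each guess fits what really happened, rewarding good guesses and fixing bad ones. Architectures like JEPA make that comparison on the watcher's simple notes rather than on every pixel, which keeps predictions sharp and real.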
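The four components above can be sketched as four tiny functions. This is a toy under stated assumptions, not an implementation of JEPA or of any real library: a "frame" is a short list of pixel brightness values, the watcher's "notes" are just the index of the brightest pixel, and the names `encode`, `predict`, `energy`, and `LATENT_CHOICES` are hypothetical.

```python
def encode(frame):
    """Watcher: reduce a row of pixels to one number, the index of the brightest pixel."""
    return float(max(range(len(frame)), key=lambda i: frame[i]))

def predict(state, action, z):
    """Guesser: next state from the current state, the action, and a 'what if' value z."""
    return state + action + z

def energy(predicted_state, actual_state):
    """Learning signal: low energy means the guess is compatible with what happened."""
    return (predicted_state - actual_state) ** 2

# Surprise handler: a few possible endings (assumed: ball slows, behaves as expected, speeds up).
LATENT_CHOICES = (-1.0, 0.0, 1.0)

def best_explanation(frame_now, action, frame_next):
    """Score each 'what if' in the encoder's compact space and return (energy, z) for the best."""
    state_now, state_next = encode(frame_now), encode(frame_next)
    scored = [(energy(predict(state_now, action, z), state_next), z) for z in LATENT_CHOICES]
    return min(scored)

frame_now = [0, 0, 9, 0, 0, 0]   # ball at index 2
frame_next = [0, 0, 0, 0, 0, 9]  # ball at index 5
lowest_energy, z = best_explanation(frame_now, action=2.0, frame_next=frame_next)
print(f"best 'what if': z={z}, energy={lowest_energy}")
```

The comparison happens on the compact notes (one number per frame) instead of pixel by pixel, which is the idea this sketch borrows from JEPA-style designs.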

How They Are Trained

Training is like teaching a kid to predict games by playing millions of short clips:

- Feed video clips + actions: Show sequences like “push cup → cup slides right.” No labels needed—it’s self-taught from real motion.

- Guess the next frame: AI imagines “what happens after?” and sketches a few possible futures (to cover surprises).

- Check against reality: Compare its guess to the actual next video frame. Good match? Keep it. Bad? Tweak the brain.

- Repeat endlessly: Use billions of YouTube videos, robot trials, or game footage. Add tricks to avoid lazy cheating (like always guessing “nothing changes”).
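Two of the steps above are easy to show in code: turning a raw clip into training examples without labels, and checking that a model is not "lazy cheating." The sketch below assumes each frame is a single number (the cup's position), and `learned_model` is a hard-coded stand-in for a trained predictor; all names are hypothetical.

```python
def make_training_pairs(frames, actions):
    """Turn one unlabeled clip into (frame, action, next_frame) examples.

    The target is simply the frame that came next, so no one has to label anything.
    """
    return [(frames[t], actions[t], frames[t + 1]) for t in range(len(frames) - 1)]

def mean_squared_error(predictor, pairs):
    """Average squared gap between the predictor's guess and the real next frame."""
    return sum((predictor(frame, action) - nxt) ** 2 for frame, action, nxt in pairs) / len(pairs)

def copy_last_frame(frame, action):
    """Lazy baseline: always guess that nothing changes."""
    return frame

def learned_model(frame, action):
    """Stand-in for a trained predictor (assumed: a push moves the cup by the push amount)."""
    return frame + action

# Toy clip: cup positions over time, plus the push applied between consecutive frames.
frames = [0.0, 1.0, 2.0, 2.0, 3.5]
actions = [1.0, 1.0, 0.0, 1.5]

pairs = make_training_pairs(frames, actions)
baseline_error = mean_squared_error(copy_last_frame, pairs)
model_error = mean_squared_error(learned_model, pairs)
beats_baseline = model_error < baseline_error
print(f"copy-last-frame error: {baseline_error}, model error: {model_error}")
```

If a trained model cannot beat `copy_last_frame`, it has probably learned the "nothing changes" shortcut rather than anything about motion.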

Key advantage: Needs far less GPU power than current LLMs. As the description above shows, no human interaction is involved during training, so we don't need massive numbers of underpaid people manually applying labels. We also drastically decrease the memory needed during training and usage, resulting in a significantly smaller environmental footprint.

Over time, it learns physics rules (gravity pulls down) without being told, just by watching the world work. No human explanations—just raw experience.
